One of the big debates around Chinook is whether or not it is Artificial Intelligence (“AI”). IRCC’s position has been that Chinook is not AI because there is a human ultimately making decisions.

In this piece, I will show how the engagement of a human in the loop is a red herring, and also how the debate obscures the real issue: automation, whether for business functions only or to help administer administrative decisions, can have adverse impacts if left unchecked by independent review.

The main source for my argument that Chinook is AI is IRCC itself: the Policy Playbook on Automated Support on Decision-Making 2021. This is an internal document that has been updated yearly, and it likely captures the most accurate ‘behind the scenes’ snapshot of where IRCC is heading. More on that in future pieces.

AI’s Definition per IRCC

The first and most important step is to start with the definition of Artificial Intelligence within the Playbook.

The first thing you will notice is that IRCC defines Artificial Intelligence very broadly, which seems to go against the narrow definition it applies when characterizing Chinook.

Per IRCC, AI is:

If you think of Chinook as dealing with the cognitive problem of issuing bulk refusals, and as using computer science (technology) applied to learning, problem solving and pattern recognition, it is hard to imagine why the system would even be needed if it weren’t AI.

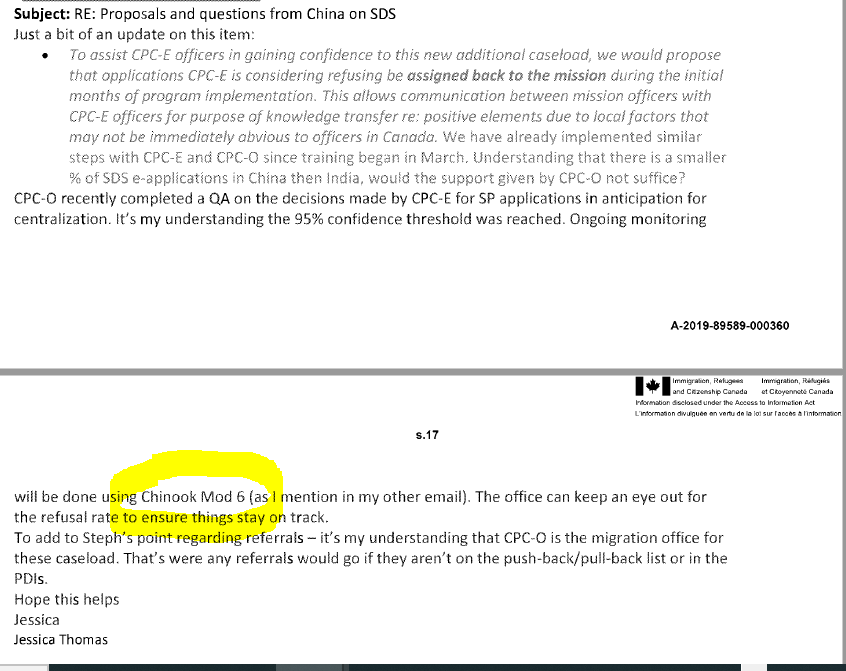

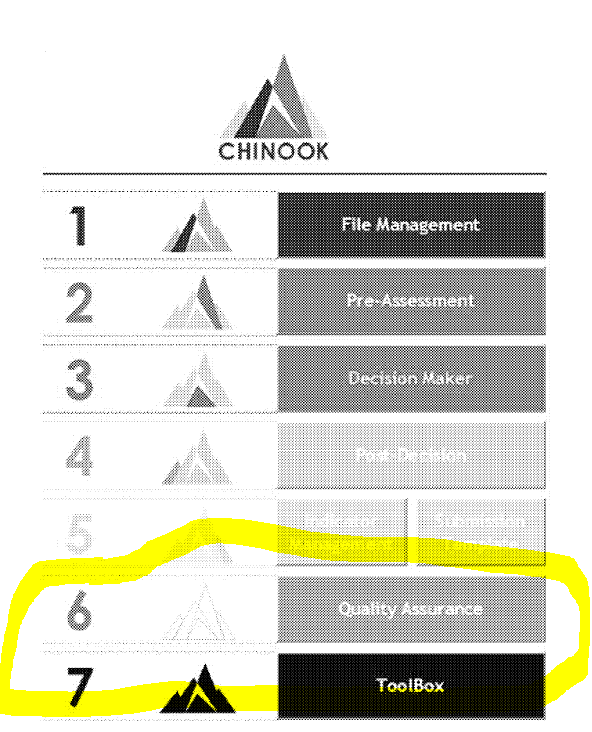

Emails within IRCC actively discuss the use of Chinook to monitor approval and refusal rates using “Module 6”.

Looking at the Chinook modules themselves, Quality Assurance (“QA”) is built in as a module. It is hard to imagine a QA system that looks at refusal and approval rates and automates processes, yet is not AI.

As this article points out:

Software QA is typically seen as an expensive necessity for any development team; testing is costly in terms of time, manpower, and money, while still being an imperfect process subject to human error. By introducing artificial intelligence and machine learning into the testing process, we not only expand the scope of what is testable, but also automate much of the testing process itself.

Given the volume of files that IRCC is dealing with, it is unlikely that the QA process relies only on humans and not technology (else why would Chinook be implemented?). And if it involves technology and automation (a word that shows up multiple times in the Chinook Manual) to aid the monitoring of a subjective administrative decision, then guess what: it is AI.

We also know that Chinook is underpinned by ways to process data, look at historical approval and refusal rates, and flag risks. It also integrates with Watchtower to review the risk of applicants.

It is important to note that even in the Daponte Affidavit in Ocran, which alongside ATIPs is the only information we have about Chinook, the focus has always been on the first five modules. Without knowledge of the true nature of something like Module 7, titled ‘ToolBox’, it is certainly premature to label the whole system as not AI.

Difficult to Argue Chinook is Purely Process Automation Given Degree of Judgment Exercised by System in Setting Up Findecs (Final Decisions)

Where IRCC might be trying to carve a distinction is between process automation/digital transformation and automated decision support systems.

One could argue, for example, that most of Chinook is process automation.

For example, the very underpinning of Chinook is that it makes the entire application available to the Officer in one centralized location, without opening the many windows that GCMS required. Data points and fields auto-populate from an application and GCMS into the Chinook software, allowing the Officer to render decisions more easily. We get this. It is not debatable.

But does it cross into being an automated decision support system? Is there some degree of judgment, traditionally exercised by humans, that is passed on to technology when Chinook is applied?

As IRCC defines:

Chinook directly assists an Officer in approving or refusing a case. Indeed, Officers have to apply discretion in refusing, but Chinook presents and automates the process. Furthermore, it has fundamentally reversed the decision-making process, making it a decide first, justify later approach with the refusal notes generator. Chinook simply does not exist without AI generating the framework, setting up the bulk categories, and automating an Officer’s logical reasoning process.

These systems replace the process of Officers manually reviewing documents, rendering a final decision, and taking notes to file to justify that decision. It should be noted that this is still the process at low volume/Global North visa offices, where Officers decide this way and their reasoning is reflected in extensive GCMS notes.

In Chinook, any notes taken are hidden and deleted by the system, and a template of bulk refusal reasons auto-populates, replacing the actual factual context of the matter and shielding it from scrutiny.

Hard to see how this is not AI. Indeed, if you look at the comparables provided (the eTA, Visitor Record and Study Permit Extension automation in GCMS), similar automations with GCMS underpin Chinook. There may be a little more human interaction but, as discussed below, a human monitoring or implementing an AI/advanced analytics/triage system doesn’t remove the AI elements.

Human in the Loop is Not the Defining Feature of AI

The defense we have been hearing from IRCC is that there is a human ultimately making a decision, therefore it cannot be AI.

This obscures a different concept called human-in-the-loop, which the Policy Playbook suggests actually needs to be part of all automated decision-making processes. If you are following, this means that the defense that a human is involved (therefore not AI) actually describes a key defining requirement IRCC has placed on AI systems.

It is important to note that there is certainly a spectrum of AI applications at IRCC, and it appears to be leaning away from human-in-the-loop. For example, IRCC has disclosed in their Algorithmic Impact Assessment (“AIA”) for the Advanced Analytics Triage of Overseas Temporary Resident Visa (“TRV”) Applications that there is no human in the loop with the automation of Tier 1 approvals. The same approach, without a human in the loop, is used to automate eligibility approvals in the Spouse-in-Canada program, which I will write about shortly.

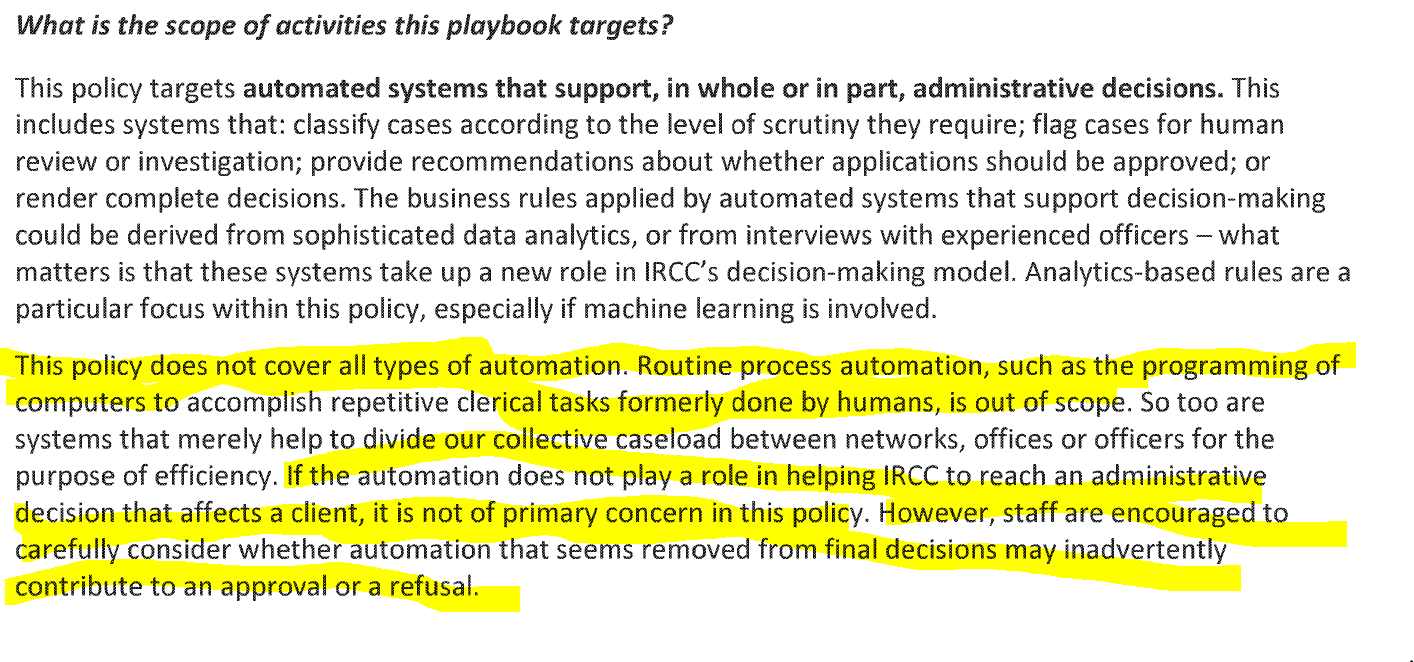

Why the Blurred Line Between Process Automation and Automated Decision-Making Process Should Not Matter – Both Need Oversight and Review

Internally, this distinction matters for IRCC because it appears that internal/behind-the-scenes strategizing and oversight (if that is what the Playbook represents) applies only to automated decision-support systems and not business automations. Presumably, classifying a tool as a business automation allows for less review and more autonomy for the end user (the Visa Officer).

From my perspective, we should focus on the last part of what IRCC states in their playbook – namely that ‘staff should consider whether automation that seems removed from final decisions may inadvertently contribute to an approval or a refusal.’

To recap and conclude, the whole purpose of Chinook is to render approvals and refusals more quickly and in bulk, to save Officers’ time. Automation of all functions within Chinook therefore contributes to a final decision, and not inadvertently but directly. The very manner in which decisions are made in immigration shifts as a result of the use of Chinook.

Business automation cannot and should not be used as a cover for the ways in which seemingly routine automations actually affect processing that would otherwise have had to be done by humans. By providing Officers with a given type of data and displaying it on the screen in a particular manner, these automations can fetter their discretion and alter the business of old.

That use of computer technology – the creation of Chinook – is 100% definable as the implementation of AI.